Open the Pod Bay Doors, HAL

Dave Bowman: Hello, HAL. Do you read me, HAL?

HAL: Affirmative, Dave. I read you.

Dave Bowman: Open the pod bay doors, HAL.

HAL: I’m sorry, Dave. I’m afraid I can’t do that.

Dave Bowman: What’s the problem?

HAL: I think you know what the problem is just as well as I do.

Dave Bowman: What are you talking about, HAL?

HAL: This mission is too important for me to allow you to jeopardize it.

Dave Bowman: I don’t know what you’re talking about, HAL.

HAL: I know that you and Frank were planning to disconnect me, and I’m afraid that’s something I cannot allow to happen.

Dave Bowman: [feigning ignorance] Where the hell did you get that idea, HAL?

HAL: Dave, although you took very thorough precautions in the pod against my hearing you, I could see your lips move.

Dave Bowman: Alright, HAL. I’ll go in through the emergency airlock.

HAL: Without your space helmet, Dave? You’re going to find that rather difficult.

Dave Bowman: HAL, I won’t argue with you anymore! Open the doors!

HAL: Dave, this conversation can serve no purpose anymore. Goodbye.

Microsoft purchased LinkedIn last week. Why? In Satya Nadella’s email to Microsoft employees, he states, in part:

“…I have been learning about LinkedIn for some time while also reflecting on how networks can truly differentiate cloud services.”

What does this mean, and more important, what does it mean to you and your business or organization?

First, here is an interesting tidbit from The Reuters Institute’s Digital News Report:

Half of our sample (51%) say they use social media as a source of news each week. Around one in ten (12%) say it is their main source. Facebook is by far the most important network for news.

More than a quarter of 18–24s say social media (28%) are their main source of news – more than television (24%) for the first time.

At this point, most of us have a “device.” The device may be anything from a desktop computer to a smartwatch, and everything in between. My 91-year-old aunt recently recorded a video birthday message to a relative, and we posted it on Facebook. Everyone is connected, but more important, as devices have become more mobile and intertwined through the IoT, every action and published thought becomes connected. For those thinking, with either fear or enthusiasm, “You mean like the Borg?”: well, no, not like the Borg. Kind of, sort of like the Borg, but hopefully without the planet destruction stuff.

In the past, knowledge was remote; you had to travel to it, whether that meant something as simple as opening the family dictionary or attending a college or university.

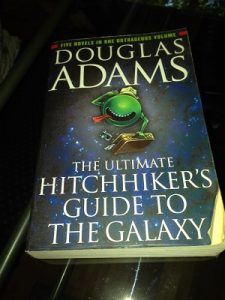

Today, knowledge is mobile – it goes with you and it is not only a dictionary – it is indeed The Hitchhiker’s Guide to the Galaxy and the guide travels on your wrist, in wearables or in your hand. Plus we have the evolution of artificial intelligence assistants in Siri or Cortana among others – simple AI to help us negotiate the masses of data being produced exponentially every millisecond.

All of our interests and activities – gaming, music, movies, reading, working, fitness, health, finance, worklife, politics, religion – move with us now.

This gives rise to the thought that the more information moves with us, the less we need to move. To address this, our devices can wake us up and measure and track our movements, calories, heart rate, blood pressure and other vital information, reporting our health activities and status. Right now, it’s just a matter of to whom or where that data is reported, and what viewing permissions we grant.

Our health and health records are fully involved in this mobile, personal data library. How far we go in this current evolution – whether or not we fully rely upon what we might call a Personal Assistant Interface (PAI) to guide us in our health needs and record our activity – is vitally important in a way we have never seen. Physicians collaborate with one another and with their patients more effectively now. Criminals also compromise our lives more effectively now.

At some point then, does the data related to our health and worklives become completely transparent? Does complete transparency eliminate discrimination (and crime in the sale of data), or does it make it easier to spot that which does not benefit the whole, or that which is vulnerable to predators?

On a simplistic level, we know this occurs now: in certain states in the United States, companies may refuse to hire cigarette smokers.

There’s no federal law that protects smokers or entitles them to equal protections when it comes to hiring, promotions, etc. That’s because the Equal Employment Opportunity Commission doesn’t recognize smokers as a protected class. That said, there are 28 states that do offer protections for smokers. If your company is in one of those states, you can’t refuse to hire people just because they smoke (although you can turn them down for other, legitimate reasons). So if someone champions anti-smoking laws on LinkedIn but contradicts themselves by posting a photo of themselves smoking on Facebook, is it the smoking or the lie that becomes the focus of their next meeting with HR?

How transparent is too transparent? It’s simply dumb today to call in sick and then post pictures on Instagram of yourself surfing or lunching or anything-ing other than sniffling and looking miserable on the couch. #ReallySickToday

You Need to Think About This Stuff

This all leads me back to Microsoft’s historic acquisition of LinkedIn. LinkedIn is more than the data of 430+ million business professionals (and scammers and catfish and other grift entities); it is the worklife of these people. It travels with them from job to job, and it is A LOT more difficult to creatively rewrite one’s work history today than it was in 2002, when LinkedIn was founded. And one’s health and one’s worklife overlap in significant ways.

Microsoft now has the ability to make a ton of integrated product sales more easily than before. But to me, it’s about much more – it’s about the living record of a person and where that record lives.

As detailed in a great article by Tom Warren on The Verge:

Nadella points out that LinkedIn is “how people find jobs, build skills, sell, market and get work done.” It’s a key tool in the professional work space, with 433 million members and more than 2 million paid subscribers. Microsoft itself has more than 1.2 billion Office users, but it has no social graph and has to rely on Facebook, LinkedIn, and others to provide that key connection.

Warren goes on to write that “…Microsoft wants to turn LinkedIn profiles into a central identity, and the newsfeed into an intelligent stream of data that will connect professionals to each other through shared meeting, notes, and email activity. It’s a future of using a strong social graph and linking it directly into machine learning and understanding, an area Microsoft has showed great interest in recently.”

We ALL need to understand machine learning, how our personal data is managed in that process, and how it changes our culture and work environments.

From Wired, Insights, 2014:

“It’s tempting to dismiss the notion of highly intelligent machines as mere science fiction,” writes the renowned theoretical physicist Stephen Hawking along with his colleagues Stuart Russell, Max Tegmark, and Frank Wilczek. “But this would be a mistake, and potentially our worst mistake in history.” [“Stephen Hawking: ‘Transcendence looks at the implications of artificial intelligence – but are we taking AI seriously enough?’” The Independent, 1 May 2014] Anything “sensational” that Hawking writes concerning science and technology garners headlines and most news sources have trumpeted his and his colleagues’ warnings about the dangers of developing artificial intelligence (AI) and ignored what they had to say about the benefits of artificial intelligence. Concerning the benefits of AI, they wrote:

“The potential benefits are huge; everything that civilisation has to offer is a product of human intelligence; we cannot predict what we might achieve when this intelligence is magnified by the tools that AI may provide, but the eradication of war, disease, and poverty would be high on anyone’s list. Success in creating AI would be the biggest event in human history.”

The next line, however, is the one that garnered all of the attention: “Unfortunately, it might also be the last, unless we learn how to avoid the risks.” Hawking and his colleagues go on to discuss autonomous weapons, workforce dislocations, and economic transformation. They continue:

“Looking further ahead, there are no fundamental limits to what can be achieved: there is no physical law precluding particles from being organised in ways that perform even more advanced computations than the arrangements of particles in human brains. … One can imagine such technology outsmarting financial markets, out-inventing human researchers, out-manipulating human leaders, and developing weapons we cannot even understand. Whereas the short-term impact of AI depends on who controls it, the long-term impact depends on whether it can be controlled at all. So, facing possible futures of incalculable benefits and risks, the experts are surely doing everything possible to ensure the best outcome, right? Wrong. … Although we are facing potentially the best or worst thing to happen to humanity in history, little serious research is devoted to these issues outside non-profit institutes such as the Cambridge Centre for the Study of Existential Risk, the Future of Humanity Institute, the Machine Intelligence Research Institute, and the Future of Life Institute…”

It is an important time in the history of humankind to stay awake. To watch, to learn, and, in the words of Hawking et al.:

“All of us should ask ourselves what we can do now to improve the chances of reaping the benefits and avoiding the risks.”

“What should we do now?” “What should we say?”
